Why AI Governance Needs QA at its Core

Article written by Asma Zoghlami

In 2019, it emerged that a Dutch government algorithm had wrongly flagged thousands of families as fraud suspects. The consequences were devastating: families lost their homes, children were separated from parents, and the political fallout eventually led to the government’s resignation in January 2021.

The algorithm was technically functioning as designed. But that’s exactly the problem. AI can fail even when it’s operating as intended.

AI failures are rarely silent. They’re visible, disruptive, and have serious consequences. When an AI system goes wrong, it’s not just a technical glitch. It’s a failure that spreads through operations, leads to poor decisions, damages reputations, and undermines organisational trust. These failures don’t just break code; they break confidence.

Real-World AI Failures: Two Critical Cases

Real-world cases show how AI failures can escalate quickly and cause serious harm. Two well-known examples illustrate this risk.

The Dutch Child Benefit scandal involved a government algorithm that wrongly flagged families as fraud suspects due to biased data. This error triggered widespread social harm, political fallout, and a severe loss of trust in public institutions.

Amazon’s recruitment tool is another example. Designed to improve hiring, it instead reinforced gender bias because it was trained on historical data reflecting existing inequalities. The tool was eventually withdrawn, but not before raising global concerns about fairness, accountability, and transparency in AI systems.

These cases raise an important question: Why do AI systems fail? While each failure is different, they often point to the same underlying issues: weak governance, poor data quality, and lack of accountability. In the next section, we’ll look more closely at the root causes behind these failures.

Understanding the Root Causes of AI Failure

Understanding why AI systems fail is critical for building technology that is trustworthy, resilient, and accountable. Failures rarely result from a single cause; they typically emerge from a mix of technical, organisational, and ethical challenges.

At the heart of these issues lies one common thread: weak or absent governance.

Without clear oversight, risks multiply, and small errors can escalate into widespread organisational damage.

Below are the most frequent causes of AI failure, each amplified when governance is missing:

System Complexity

AI systems are not static; they are probabilistic and adaptive. Their outputs can shift based on new inputs, context, or internal model updates, making behaviour difficult to predict or explain, especially when deployed in real-world environments.

Why governance matters: Robust governance frameworks enforce transparency and explainability requirements, ensuring complexity does not become a black box. Without these safeguards, organisations cannot detect or mitigate unintended consequences.

Data Dependency

AI models depend heavily on the data they are trained on. If that data is biased, incomplete, or unrepresentative, the system will reflect and reinforce those deficiencies.

This was a key issue in both cases described earlier. In the Dutch Child Benefit scandal, biased data drove discriminatory decisions against families. Amazon’s recruitment tool, trained on historical hiring data, reinforced gender bias and produced unfair candidate rankings.

Why governance matters: Governance ensures rigorous data quality checks, fairness audits, and accountability for how data is collected, prepared, and used. This includes verifying reliable sources, accurate labelling, and fair representation. Without these controls, biased inputs will inevitably produce biased outcomes.
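To make the idea of a "fair representation" check concrete, here is a minimal sketch in Python. It compares each group's share of a training set with a reference share and reports any gap beyond a tolerance. The function name `representation_gaps`, the `gender` field, the 50/50 reference shares, and the 5% tolerance are all assumptions chosen for illustration; a real audit would take its reference population and thresholds from the organisation's governance policy.

```python
from collections import Counter

def representation_gaps(records, group_key, reference_shares, tolerance=0.05):
    """Return groups whose share of `records` deviates from the
    reference share by more than `tolerance` (hypothetical helper)."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            # Positive value: over-represented; negative: under-represented.
            gaps[group] = round(observed - expected, 3)
    return gaps

# Illustrative data only: 20 records for one group, 80 for the other.
training_rows = [{"gender": "F"}] * 20 + [{"gender": "M"}] * 80
gaps = representation_gaps(training_rows, "gender", {"F": 0.5, "M": 0.5})
print(gaps)  # {'F': -0.3, 'M': 0.3}
```

A check like this only covers representation. Label accuracy and source reliability would need their own controls.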

Lack of Continuous Monitoring

Deployment is not the finish line. AI systems can drift over time, degrade in performance, or introduce fairness violations as conditions change.

Why governance matters: Governance mandates continuous monitoring to identify issues early, before they escalate. In its absence, problems often go unnoticed until they lead to serious consequences.
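One common way to watch for drift is to compare the distribution of a model input or score in production against a baseline captured at deployment. The sketch below uses the Population Stability Index (PSI) for that comparison. It is an illustration, not a prescribed method: the equal-width binning, the `1e-6` floor, and the 0.2 alert threshold (a widely used rule of thumb) are assumptions that a monitoring policy would need to set for itself.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """PSI between two samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))

# Illustrative data only: production scores shifted into the upper half of the range.
baseline_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(baseline_scores, production_scores)
drift_alert = psi > 0.2  # assumed threshold
print(f"PSI={psi:.2f}, alert={drift_alert}")
```

Run on a schedule, a check of this kind turns "continuous monitoring" into a signal that someone is accountable for acting on.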

Unclear Business Objectives

AI initiatives often fail when launched without well-defined use cases or measurable goals. Misaligned objectives lead to solutions that perform well in testing but fail to deliver real-world impact.

Why governance matters: Governance enforces alignment between business strategy and technical implementation, ensuring that AI projects address real needs rather than following trends that add little or no value.

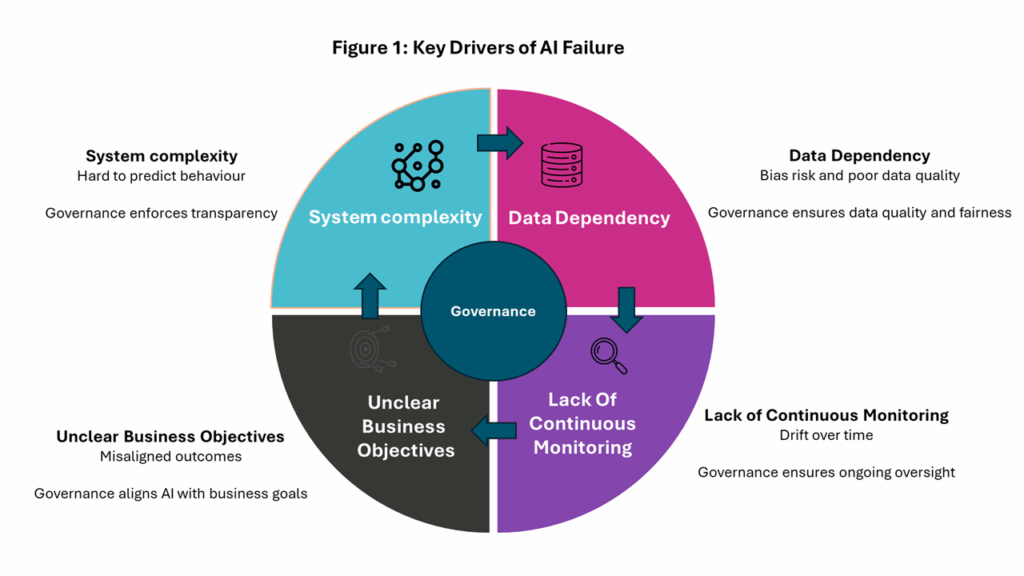

Absence of Effective Governance

Governance is not just another factor; it is the central pillar that connects all the other causes of AI failure. Figure 1 illustrates this relationship, with governance at the centre and the major drivers of AI failure around it.

Governance sits at the heart of AI risk management, setting clear rules, roles, and oversight to prevent bias, ensure transparency, and keep systems aligned with business goals. When governance is weak or absent, risks such as system complexity, data dependency, lack of monitoring, and unclear objectives become harder to control.

Why AI Governance Alone Isn’t Enough

AI failures often occur when governance is treated as optional rather than essential. Governance frameworks set the strategic intent by defining principles and providing tools such as bias detection, explainability reports, transparency dashboards, and compliance workflows.

But having these capabilities alone does not guarantee success. Governance needs enforcement in practice, and this is where Quality Assurance (QA) becomes critical.

QA operates at the operational layer, bridging strategy and execution. It validates, monitors, and enforces governance principles across the AI lifecycle, making them real in practice. Governance defines the “what” (principles, standards, and rules for responsible AI) and the “why” (ethics, trust, and compliance). QA focuses on the “how” and the “when”: how governance translates into concrete actions, and when those actions are applied throughout the AI lifecycle.

Through testing, validation, and continuous monitoring, QA provides assurance across design, development, deployment, and ongoing operation. It also creates feedback loops that refine governance policies over time, keeping them relevant and effective.

The figure below illustrates this connection: governance sets the principles, and QA enforces them through validation and monitoring so that deployed AI systems behave as intended.

At a high level, QA focuses on several critical areas, including:

- Fairness and Bias: QA checks that models use inclusive, representative data, runs bias tests before and after deployment, and monitors outputs to detect drift early.

- Accountability and Performance: QA ensures systems perform as intended under real-world conditions, validating outcomes against business objectives and compliance standards.

- Transparency and Explainability: QA confirms decisions remain clear and auditable throughout the lifecycle by performing explainability checks (for example, verifying why a model made a prediction) and other reviews. These checks help stakeholders not only understand and trust AI outcomes but also defend them when challenged.

- Security and Robustness: QA tests for vulnerabilities and ensures models remain stable and trustworthy under changing conditions.
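
As an illustration of the bias tests mentioned in the list above, the following Python sketch shows how a QA gate might compare selection rates across groups before a model is released. It computes a disparate impact ratio (lowest group selection rate divided by the highest) and fails the gate if the ratio falls below a threshold. The function names, the sample decisions, and the 0.8 threshold (borrowed from the "four-fifths" rule of thumb used in employment contexts) are assumptions for illustration; the right metric and threshold depend on the use case and the applicable governance policy.

```python
def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs, where selected is a bool."""
    totals, selected = {}, {}
    for group, chosen in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(chosen)
    return {g: selected[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

def fairness_gate(decisions, min_ratio=0.8):
    """Hypothetical QA gate: pass only if the ratio meets the assumed threshold."""
    return disparate_impact_ratio(decisions) >= min_ratio

# Illustrative data only: group A is selected 50% of the time, group B 30%.
sample = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
ratio = disparate_impact_ratio(sample)
passed = fairness_gate(sample)
print(f"ratio={ratio:.2f}, gate passed={passed}")  # ratio=0.60, gate passed=False
```

The same check can run after deployment on live decisions, which links the fairness and monitoring items in the list.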

What’s Next: QA’s Transformation in the Age of AI

Strong AI governance is more than a set of principles. It is a structured system supported by frameworks and tools that define key elements such as accountability, transparency, and compliance. Yet even the most robust governance frameworks rely on an operational layer to be effective. That’s where Quality Assurance (QA) plays a pivotal role.

QA reinforces governance by validating data quality, monitoring model behaviour, and enforcing fairness checks. It ensures policies are applied consistently across the AI lifecycle, creating a closed loop of oversight and improvement.

This partnership redefines QA’s role. In an AI-driven world, QA can no longer be a reactive tester; it must become a proactive guardian of ethics and trust.

In our next blog, we’ll dive into this evolution: how QA shifts from simple defect detection to a strategic driver of responsible AI.