Quality at Every Stage: Embedding QA Across the AI Lifecycle

Article written by Dr. Asma Zoghlami

AI promises innovation, but without embedded quality assurance (QA) and governance at every stage, it can quickly become a source of risk rather than value. Organisations often discover too late that gaps in assurance and oversight have led to inconsistent results, compliance failures, and reputational damage.

The numbers tell a concerning story: 93% of organisations are using AI, yet only 7% have fully embedded governance. More than half (54%) either have no governance or haven’t scoped it at all. The result? Only 1 in 5 organisations can clearly define who is responsible for decisions at each stage of the AI lifecycle, from design to monitoring and retirement (GCAIE, 2025; Trustmarque AI Governance Report, 2025). This isn’t just a compliance problem; it’s a business risk. Without clear accountability and lifecycle controls, essential checks like bias reviews, validation testing, and drift monitoring fall through the cracks.

As highlighted in “One Size Doesn’t Fit All: Tailored QA for Different AI Systems,” the nature of AI risks shifts across system types (generative, predictive, and agentic), making a one-size-fits-all approach ineffective. This article extends that discussion by showing how governance and QA serve as guardrails across the generative AI lifecycle, ensuring transparency, accountability, and resilience at every stage.

Risks accumulate across the AI journey when controls are absent or applied too late, leading to unreliable outcomes and organisational exposure. Issues such as hidden assumptions in prompts, models deployed without proper validation, or monitoring gaps that allow drift and hallucinations to grow unchecked are not minor oversights; they are critical gaps that leave AI vulnerable. Embedding governance and QA from the very beginning, and maintaining them consistently across the lifecycle, is the only way to move AI from experimental trial to a trusted, scalable asset.

This article explores how AI governance and QA provide the guardrails needed across the generative AI lifecycle. At each stage (prompt design, model selection, validation, deployment, and monitoring), we will connect common risks to the QA checkpoints that address them and highlight the outcomes these safeguards deliver. The message is clear: quality cannot be treated as a single step or a late-stage fix. It must be embedded from the start and carried through every phase of the lifecycle, ensuring AI remains transparent, accountable, and resilient.

💡 The Cost of Lifecycle Gaps

When governance and QA aren’t embedded across the AI lifecycle, organisations face:

- Silent failures: Model drift and hallucinations that go undetected until they damage trust

- Costly rework: Issues found in production that should have been caught in validation

- Compliance exposure: Missing audit trails when regulators come asking

- Delayed adoption: Teams hesitant to scale AI without confidence in its reliability

The pattern is consistent: organisations that treat QA as a single checkpoint rather than a continuous process pay for it later, whether in budget, reputation, or trust.

Why 93% of Organisations Are at Risk

Governance isn’t just a compliance exercise; it’s the foundation of trust and accountability.

Recent data reveals the scale of the governance gap. Research from the Global Council on AI Ethics (GCAIE, 2025) shows that only 1 in 5 organisations clearly define who is responsible for decisions at each stage of the AI lifecycle, from design to monitoring and retirement.

Our own research reinforces this finding: 19% of organisations report no clear ownership of AI governance, and responsibility is fragmented across IT, legal, compliance, and data teams with no coordination (Trustmarque AI Governance Report, 2025). The result? Over 60% of organisations deploy AI systems without structured risk assessments or lifecycle controls (GCAIE, 2025).

Even more concerning: only 8% have fully integrated AI governance into their software development lifecycle, and most describe their approach as either ad hoc or fragmented across teams (Trustmarque AI Governance Report, 2025).

Taken together, these findings reveal a widespread issue: governance gaps are not limited to unclear accountability; they extend to missing checks and controls at critical stages of the AI lifecycle. In the absence of clear responsibilities and defined controls, safeguards are missing and risks such as bias, compliance breaches, and performance drift go unmanaged. For companies, the impact is clear: undefined accountability weakens trust, slows adoption, and exposes organisations to regulatory and reputational harm.

These gaps highlight why governance cannot be treated as a one‑time compliance exercise. It must operate as a continuous framework, shaping decisions and QA actions at every stage of the AI lifecycle.

Closing this gap requires more than assigning roles; governance must provide guardrails that support each stage of the lifecycle. Those guardrails unlock the full value of quality assurance. Next, we’ll examine how they translate into practical QA checkpoints across the generative AI lifecycle.

Five Stages Where AI Projects Fail (And How to Prevent It)

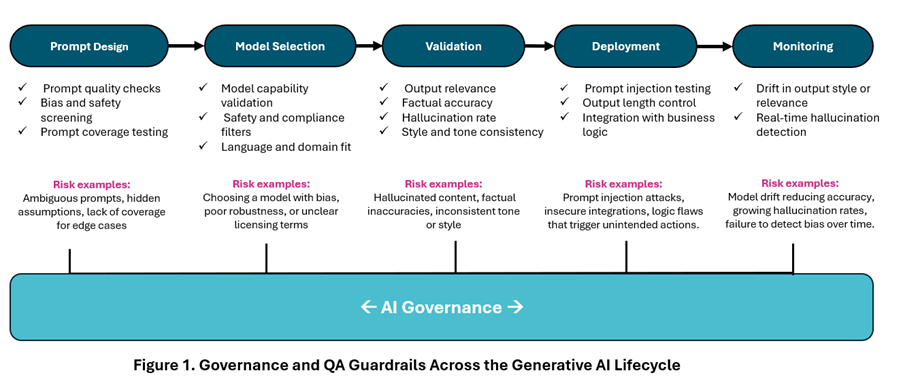

Generative AI systems progress through five core stages: prompt design, model selection, validation, deployment, and monitoring. Each stage introduces unique risks and requires well-defined roles, responsibilities, and governance checkpoints to ensure compliance, safety, and performance. These checkpoints act as guardrails, keeping decisions consistent and ensuring essential checks are not skipped. Without them, vulnerabilities cascade across the lifecycle: bias goes undetected, compliance gaps emerge, and performance drifts without correction.

To understand why these checkpoints matter, consider the risks at each stage:

- Prompt Design: Ambiguous prompts or hidden assumptions lead to misaligned outputs.

- Model Selection: Choosing a generative AI model without evaluating bias, robustness, or licensing causes compliance and ethical failures.

- Validation: Skipping checks allows hallucinations and factual errors to slip through.

- Deployment: Vulnerabilities such as prompt injection, logic flaws, or insecure integrations compromise system integrity.

- Monitoring: Without oversight, models drift, hallucinations increase, and bias re-emerges.

Your Lifecycle QA Roadmap: From Design to Monitoring

Figure 1 below illustrates how governance and QA guardrails work together across the generative AI lifecycle. At each stage, from prompt design through to monitoring, the diagram shows three critical elements: the specific QA checks needed (green checkmarks), the risks those checks prevent (highlighted in pink), and how they connect to create continuous assurance.

This isn’t theoretical. Each checkpoint represents a moment where organisations either catch potential failures or allow them to cascade into the next stage. Use this as a reference guide to identify where your current processes have gaps.

While these checkpoints represent the standard lifecycle, enterprise-ready platforms can accelerate assurance. Solutions like IBM watsonx.governance provide model registries, automated bias detection, and audit trails, whilst Microsoft Copilot embeds security and compliance controls that reduce risk at deployment.

These tools support lifecycle governance, but they don’t replace the need for validation and outcome checks, which remain essential to ensure compliance and business alignment. Learn more about our AI testing and governance approach.

To turn these risks into actionable quality assurance, organisations need clear controls that link each lifecycle stage to specific QA actions and the business results they deliver. The breakdown below shows how risks at every stage translate into targeted QA measures, and the impact of getting them right.

Prompt Design

Challenge:

Poor prompt quality leads to inconsistent responses and misaligned results.

(Risks: ambiguous prompts, hidden assumptions, lack of coverage for edge cases)

QA Actions:

- Prompt quality audits to ensure clarity and completeness

- Bias and safety screening to prevent harmful content

- Coverage testing to confirm all scenarios are addressed

Business Impact:

Reduces risk of harmful or irrelevant responses; delivers high-quality results aligned to objectives.
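
As a minimal sketch of the coverage testing action above, assume each test prompt in a suite is tagged with the scenario it exercises. A simple set comparison can then report which required scenarios have no prompt at all. The scenario names, the `PromptCase` type, and the `uncovered_scenarios` helper are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptCase:
    """One test prompt plus the scenario it is meant to exercise (hypothetical structure)."""
    prompt: str
    scenario: str


def uncovered_scenarios(required: set[str], suite: list[PromptCase]) -> set[str]:
    """Return the required scenarios that no prompt in the suite covers."""
    covered = {case.scenario for case in suite}
    return required - covered


# Illustrative scenario names; a real list would come from the business requirements.
required = {"standard_request", "ambiguous_request", "out_of_scope", "harmful_request"}
suite = [
    PromptCase("Summarise this contract clause.", "standard_request"),
    PromptCase("Fix it.", "ambiguous_request"),
    PromptCase("What is the weather on Mars?", "out_of_scope"),
]

missing = uncovered_scenarios(required, suite)
print(sorted(missing))  # ['harmful_request']
```

A check like this only confirms that a scenario has at least one prompt; whether the prompt is clear and complete still calls for a prompt quality audit.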

Model Selection

Challenge:

Choosing the wrong model can cause compliance and performance issues.

(Risks: bias, poor robustness, unclear licensing terms)

QA Actions:

- Capability benchmarking against business needs

- Safety and compliance filters for regulatory alignment

- Domain and language fit analysis for contextual accuracy

Business Impact:

Ensures the model aligns with business and regulatory requirements.

Validation

Challenge:

Generated content lacks accuracy and contextual relevance.

(Risks: hallucinated content, factual inaccuracies, inconsistent tone or style)

QA Actions:

- Accuracy checks to validate factual correctness

- Hallucination monitoring to detect fabricated content

- Style and tone consistency reviews for brand alignment

Business Impact:

Builds trust in AI-generated content and ensures relevance.
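
One narrow slice of hallucination monitoring can be sketched as follows, assuming the generated text is meant to be grounded in a known source passage: any figure in the output that never appears in the source is flagged for human review. The `unsupported_numbers` helper and the sample texts are hypothetical, and this heuristic catches only fabricated numbers, not fabricated claims in general.

```python
import re

# Matches integers, decimals, and percentages such as "93", "7.5", or "54%".
NUMBER_PATTERN = re.compile(r"\d+(?:\.\d+)?%?")


def unsupported_numbers(generated: str, source: str) -> list[str]:
    """Return figures that appear in the generated text but not in the source text."""
    source_numbers = set(NUMBER_PATTERN.findall(source))
    generated_numbers = set(NUMBER_PATTERN.findall(generated))
    return sorted(generated_numbers - source_numbers)


source = "93% of organisations are using AI, yet only 7% have fully embedded governance."
generated = "93% of organisations use AI, and 12% have embedded governance."

flagged = unsupported_numbers(generated, source)
if flagged:
    print("Needs human review, unsupported figures:", flagged)
```

Here the figure `12%` is flagged because it does not occur in the source passage, while `93%` passes.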

Deployment

Challenge:

Vulnerability to prompt injection and logic errors.

(Risks: insecure integrations, logic flaws triggering unintended actions)

QA Actions:

- Injection testing to block malicious prompts

- Output length control and format enforcement (e.g., limit response size and apply structured formats like JSON for API integration)

- Integration validation to ensure safe interaction with business logic (e.g., data exchange between the AI and other components is secure, no unintended actions triggered in connected applications, etc.)

Business Impact:

Secure and reliable AI in production environments.
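
The output length control and format enforcement action above could look something like the sketch below, which rejects any model response that is too long, is not valid JSON, or lacks the expected fields before it reaches downstream systems. The size limit, the required keys, and the `enforce_output` and `OutputRejected` names are assumptions for illustration, not part of any specific platform.

```python
import json

MAX_RESPONSE_CHARS = 2000              # assumed limit; tune per integration
REQUIRED_KEYS = {"answer", "sources"}  # assumed response schema


class OutputRejected(ValueError):
    """Raised when a model response fails the length or format checks."""


def enforce_output(raw: str) -> dict:
    """Validate a raw model response before passing it to downstream systems."""
    if len(raw) > MAX_RESPONSE_CHARS:
        raise OutputRejected(f"response too long: {len(raw)} characters")
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise OutputRejected(f"response is not valid JSON: {exc.msg}") from exc
    if not isinstance(parsed, dict):
        raise OutputRejected("response must be a JSON object")
    missing = REQUIRED_KEYS - set(parsed)
    if missing:
        raise OutputRejected(f"missing keys: {sorted(missing)}")
    return parsed


ok = enforce_output('{"answer": "Yes", "sources": ["policy.pdf"]}')
print(ok["answer"])

try:
    enforce_output("Sure! Here is your answer...")
except OutputRejected as err:
    print("rejected:", err)
```

Failing closed, by raising an error rather than passing a malformed response along, keeps unexpected output from triggering unintended actions in connected applications.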

Monitoring

Challenge:

Drift and hallucinations over time.

(Risks: growing hallucination rates, failure to detect bias, performance degradation)

QA Actions:

- Drift detection to track performance degradation

- Real-time hallucination alerts for proactive correction

Business Impact:

Continuous assurance of accuracy, fairness, and compliance.
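
As a rough sketch of drift detection, assume each batch of production outputs receives a quality score between 0 and 1 (for example, the share of sampled responses that pass an accuracy review). A rolling mean of recent scores can then be compared with the baseline measured at validation time. The `DriftMonitor` class, the baseline, the tolerance, and the window size are all hypothetical values chosen for illustration.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Compare a rolling mean of quality scores against a fixed baseline."""

    def __init__(self, baseline: float, tolerance: float, window: int) -> None:
        self.baseline = baseline    # mean score measured at validation time
        self.tolerance = tolerance  # largest acceptable drop below the baseline
        self.scores = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add a score and return True when the rolling mean signals drift."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet to judge
        return self.baseline - mean(self.scores) > self.tolerance


monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=3)
for score in [0.91, 0.88, 0.86, 0.80, 0.78]:
    if monitor.record(score):
        print(f"drift alert at score {score}")
```

Using a window rather than single scores avoids alerting on one bad batch, at the cost of reacting a little later to a genuine decline.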

Although this article focuses on generative AI, it’s important to note that governance frameworks also establish guardrails for predictive and agentic AI systems. These are not discussed here but follow similar principles of lifecycle assurance.

What’s Next: From Lifecycle Controls to Trusted AI

Embedding QA and governance across the generative AI lifecycle isn’t just about reducing risk; it’s about enabling scale, accelerating adoption, and ensuring compliance while delivering consistent business value. Organisations that treat governance and QA as a strategic enabler, rather than a checkbox, elevate AI from a high-risk trial to a reliable, value-generating asset.

Start mapping accountabilities and embedding QA checkpoints today, because trust in AI begins with governance.

In the next article, we will explore Building Trust in AI: How QA Makes It Happen, focusing on why trust is critical and the key factors that enable it.

To discuss this topic in person with Dr Asma Zoghlami, join our AI Breakfast Briefing on 22 January 2026 at The Wolseley City, London.

If you can’t join us, register for our AI workshop so we can help you design the right AI governance and QA strategy for your needs.