One Size Doesn’t Fit All: AI Governance and Tailored QA for Different AI Systems

Article written by Dr. Asma Zoghlami

In 2024, Deloitte Australia was commissioned to produce a 237-page welfare compliance review for the Australian government – a case that exposed serious gaps in AI governance. The report included fabricated citations and even a fake legal quote – clear signs of generative AI hallucination. Deloitte later confirmed that a generative AI tool had been used during drafting and agreed to refund part of its AU$440,000 fee.

This wasn’t a failure of AI technology. It was a failure of AI governance and quality assurance. Hallucinations are a known risk of generative AI, yet the AI governance checks needed to catch fabricated references weren’t in place. The incident highlights a critical problem: organisations are applying generic QA approaches to AI systems that fail in fundamentally different ways.

In our previous article, From Bug Catcher to Behaviour Guardian, we explored how QA and AI governance must evolve for AI systems. Now we face the next challenge: Can one QA approach really work for AI that writes content, predicts trends, and makes decisions on its own? The reality is that assurance and AI governance cannot be applied uniformly.

Generative models, predictive systems, and agentic AI each carry distinct risks and demand tailored QA and AI governance strategies. From chatbots to forecasting engines to autonomous agents, this diversity of use cases exposes the limits of a “one size fits all” mindset and shows why specialised AI governance and assurance approaches are essential.

This article explains why these differences matter and details how AI governance and assurance strategies must adapt to the distinct characteristics of these systems.

Why Tailored QA Matters

Organisations face two critical challenges with AI quality assurance and AI governance. First, many are being told to “test AI like regular software” – an approach that provides false security whilst missing AI-specific risks entirely. Second, even when organisations recognise AI needs different QA and governance, they often apply the same approach across all AI projects, from chatbots to fraud detection to autonomous agents. Both approaches waste budget testing the wrong things whilst leaving real risks unchecked. To understand why, we need to examine how AI systems fundamentally differ.

AI is not a single technology; it includes distinct categories of systems, each with unique objectives, learning methods, and assurance and AI governance needs. The dimensions that shape how these systems operate are not abstract; they directly influence risk profiles and dictate where assurance and AI governance efforts must concentrate.

Five AI Dimensions Shaping QA and AI Governance Needs

We’ve developed a framework that maps five key dimensions of AI systems to their quality assurance and AI governance requirements. These dimensions reveal where risks arise and where QA and AI governance must focus their efforts.

| Dimension | What it means | QA impact |
| --- | --- | --- |
| Objectives & Decision Scope | What the system does (create content, predict outcomes, execute actions) | Defines where failures show up, what gets damaged, and which governance controls must be in place |
| Interaction Style | Prompt-driven vs continuous operation | Determines exposure to risk and error frequency, and how governance controls are applied in real time |
| Learning Approach | Periodic retraining vs real-time adaptation | Affects behaviour predictability and the cadence of governance reviews |
| Adaptability | How and when the system changes | Influences monitoring requirements and triggers for governance intervention |
| Accountability | How traceable decisions and actions are | Shapes oversight, auditability, and compliance needs |

Let’s examine each dimension in detail.

- Objectives and decision scope (impact area): Generative models focus on creating new content, predictive models on forecasting outcomes, and agentic systems on autonomous control and goal execution. These objectives define what the system impacts and which AI governance controls are required. For instance, a customer support chatbot primarily impacts communication quality and information accuracy, so errors show up in clarity, tone, misleading content, or user satisfaction. In contrast, an agent like a travel booking assistant impacts execution quality and outcome reliability, with errors showing up in how well actions are carried out and directly altering bookings, payments, or schedules.

- Interaction style (exposure to risk): Systems may act only when prompted or operate continuously. Continuous operation in autonomous systems increases their exposure to risk and raises the bar for effective AI governance. For example, a customer support chatbot interacts only when a user sends a query, so errors are limited to isolated conversations. In contrast, an autonomous travel booking agent operates continuously, monitoring prices and making bookings in real time, which means errors can occur at any moment and directly affect customer transactions.

- Learning approach (behaviour predictability): This dimension looks at whether the system learns in fixed cycles (through retraining) or adapts continuously. Generative and predictive systems retrain periodically, so behaviour is relatively stable between updates. Agentic systems adapt in real time while making autonomous decisions, which reduces predictability because new behaviours can emerge at any moment. For example, a fraud detection model retrained monthly behaves consistently within a cycle, while a travel booking agent adapts instantly to changes in price, user preferences, or availability, so its behaviour can shift at any time. AI governance policies need to define how such learning is reviewed and controlled.

- Adaptability (changeability): Generative and predictive models typically evolve through retraining or fine-tuning, whereas agentic systems may adjust autonomously as they execute goals in dynamic contexts. The more a system can change, the more its behaviour can shift unexpectedly, and the greater the need for ongoing checks. A chatbot changes only after scheduled retraining, so its behaviour is predictable between updates. The travel booking agent adapts instantly to price changes or cancellations, so errors can directly affect bookings; this requires close observation of changes and clear AI governance rules about acceptable behaviour.

- Accountability (control and traceability): Generative and predictive systems rely on clear documentation of training and deployment decisions, making outputs easier to trace. Agentic systems introduce complexity because autonomous actions can affect safety or compliance, requiring strong AI governance and oversight. For example, a chatbot’s responses can be traced back to prompts and training data, while a travel booking agent makes bookings autonomously, creating accountability challenges when errors affect financial transactions or regulatory compliance.

These dimensions show where risks arise, and where QA and AI governance need to focus.
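One way to make the five dimensions operational is to record them for each system and derive a starting set of controls from that record. The sketch below is illustrative only: the `SystemProfile` class, its field values, and the rules in `suggested_controls` are assumptions made for this example, not a standard or a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical record of the five dimensions for one AI system."""
    name: str
    objective: str             # "create_content", "predict" or "execute_actions"
    interaction: str           # "prompt_driven" or "continuous"
    learning: str              # "periodic_retraining" or "real_time"
    adapts_autonomously: bool  # adaptability
    actions_traceable: bool    # accountability


def suggested_controls(p: SystemProfile) -> list[str]:
    """Map a profile to illustrative controls (assumed rules, not a standard)."""
    controls = ["baseline output review"]
    if p.interaction == "continuous":
        controls.append("live monitoring")
    if p.learning == "real_time" or p.adapts_autonomously:
        controls.append("behaviour change alerts")
    if p.objective == "execute_actions":
        controls.append("human override")
    if not p.actions_traceable:
        controls.append("decision logging")
    return controls


chatbot = SystemProfile("support chatbot", "create_content", "prompt_driven",
                        "periodic_retraining", False, True)
booking_agent = SystemProfile("travel booking agent", "execute_actions",
                              "continuous", "real_time", True, False)

print(suggested_controls(chatbot))
print(suggested_controls(booking_agent))
```

In this toy setup the chatbot ends up with only the baseline review, while the booking agent accumulates every additional control, which mirrors the contrast drawn in the examples above.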

Tailored assurance ensures that controls align with system characteristics and the risks they pose, so reliability, fairness, and safety are not compromised. The importance of tailoring goes beyond recognising variation; it is about addressing the vulnerabilities those variations create. Differences in objectives, learning styles, and adaptability generate distinct risks, and these risks determine where QA and AI governance must concentrate. Targeted assurance is effective precisely because it is grounded in understanding risk.

Risk Profiles Across AI Types

Different AI systems introduce distinct vulnerabilities that shape where Quality Assurance (QA) and AI governance must focus. These risks affect reliability, fairness, and safety.

QA has always been risk-driven, prioritising effort where failure would have the greatest impact. With AI, this principle becomes even more critical as AI behaviour is driven by changing data, evolving models, and autonomous decision‑making, which increase uncertainty and amplify risk. Generic checks are not enough; assurance and AI governance must be tailored to risk exposure. If these risks are not understood and managed, they can result in compliance failures, operational harm, and reputational damage.

Generative AI: Key Risks

Generative AI brings powerful capabilities for creativity and automation, but it also introduces risks such as:

- Hallucinations: Outputs that sound convincing but are incorrect, misleading, or fabricated.

- Bias and Discrimination: Expressions or representations that reflect unfair stereotypes from training data.

- Irrelevance: Content that is grammatically correct but off topic or not useful for the intended purpose.

- Prompt Injection: Malicious or manipulated inputs that trick the system into producing unsafe, unintended, or harmful outputs.

These risks directly affect customer trust, regulatory compliance, and AI governance obligations. As the Deloitte incident demonstrates, a single hallucination failure can result in six-figure costs and reputational damage.
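Fabricated references are one of the easier hallucination symptoms to screen for automatically. The following is a minimal sketch, assuming citations appear in an `[Author Year]` style and that a reviewer maintains a registry of verified references; `TRUSTED_SOURCES`, the citation pattern, and the sample draft are all hypothetical.

```python
import re

# Hypothetical registry of references a human reviewer has already verified.
TRUSTED_SOURCES = {"Smith 2021", "Nguyen 2019"}

# Assumed citation style: "[Author Year]", for example "[Smith 2021]".
CITATION_PATTERN = re.compile(r"\[([A-Z][A-Za-z]+ \d{4})\]")


def unverified_citations(text: str) -> list[str]:
    """Return cited references that are missing from the trusted registry."""
    cited = CITATION_PATTERN.findall(text)
    # Remove duplicates while preserving order of first appearance.
    unique = list(dict.fromkeys(cited))
    return [c for c in unique if c not in TRUSTED_SOURCES]


draft = (
    "Compliance rates improved [Smith 2021], and the court agreed "
    "[Harlow 2020]. Later work confirmed this [Smith 2021]."
)
print(unverified_citations(draft))
```

A flagged citation is not proof of fabrication; it only marks a reference that a person still needs to check against the original source before the document is released.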

Predictive AI: Key Risks

Predictive AI supports decision-making by estimating future outcomes from data patterns. While valuable, it also presents risks including:

- Model Drift: Accuracy declines as input data changes over time, leading to unreliable predictions.

- Biased predictions: Unequal or unfair results caused by imbalanced or incomplete datasets.

- Auditability challenges: Limited transparency or explainability, making it difficult to validate or trust predictions.

- Poor Data Quality: Inaccurate, incomplete, or inconsistent input data that leads to unreliable or misleading outputs.

These risks often emerge gradually, making them harder to detect. By the time accuracy decline becomes obvious, significant business decisions and AI governance commitments may already be compromised.
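Because drift builds up gradually, it helps to compare recent inputs against a baseline on a schedule rather than wait for accuracy to fall. The sketch below uses the Population Stability Index (PSI), one common drift measure among several; the bin count, the sample data, and the 0.25 alert threshold (a widely quoted rule of thumb, not a requirement) are assumptions for illustration.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in an edge bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data: a uniform baseline and a sample shifted upwards.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb value
for label, sample in [("unchanged", baseline), ("shifted", shifted)]:
    score = psi(baseline, sample)
    status = "ALERT" if score > ALERT_THRESHOLD else "ok"
    print(f"{label}: PSI={score:.3f} {status}")
```

In practice the check would run per input feature on each monitoring cycle, with alerts feeding the governance review that decides whether to recalibrate or retrain.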

Agentic AI: Key Risks

Agentic AI operates autonomously to achieve defined goals. It poses risks such as:

- Decision Safety: Actions that may compromise safety or conflict with ethical boundaries.

- Autonomy Risks: Critical decisions made without sufficient human oversight or intervention.

- Goal Misalignment: Objectives that diverge from organisational priorities or fail to reflect user intent.

- Coordination Failures: Conflicts or inefficiencies when multiple agents act independently without proper alignment.

With autonomous systems, the stakes are highest. Without proper safeguards and robust AI governance, a single unsafe decision can cascade into operational failures or regulatory breaches.
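A common safeguard against a single unsafe decision is a policy check that sits between the agent's proposed action and its execution. The sketch below shows the idea for a booking agent; the action kinds, the spending limits, and the three outcomes are hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    kind: str      # for example "book_flight" or "refund"
    amount: float  # monetary value of the action


# Hypothetical policy: allowed action kinds and spending limits.
ALLOWED_KINDS = {"book_flight", "book_hotel"}
AUTO_APPROVE_LIMIT = 500.0
HARD_LIMIT = 2000.0


def review(action: ProposedAction) -> str:
    """Return 'allow', 'escalate' (needs a human) or 'block'."""
    if action.kind not in ALLOWED_KINDS or not 0 <= action.amount <= HARD_LIMIT:
        return "block"
    if action.amount > AUTO_APPROVE_LIMIT:
        return "escalate"
    return "allow"


for proposed in [
    ProposedAction("book_flight", 120.0),
    ProposedAction("book_hotel", 900.0),
    ProposedAction("refund", 50.0),
]:
    print(proposed.kind, proposed.amount, "->", review(proposed))
```

Keeping the policy outside the agent means QA can test it in isolation, and governance owners can change the limits without retraining or redeploying the agent itself.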

These examples show that vulnerabilities are not uniform; they vary depending on how AI is used. Alongside these differences, all AI systems share common challenges. They can be exposed to security weaknesses where systems are attacked, corrupted, or manipulated; they may be misused in harmful or noncompliant ways; and they often face performance limits, failing or slowing down under heavy load. Recognising both individual and shared risks is critical. It ensures QA and AI governance effort is directed where assurance delivers the greatest impact.

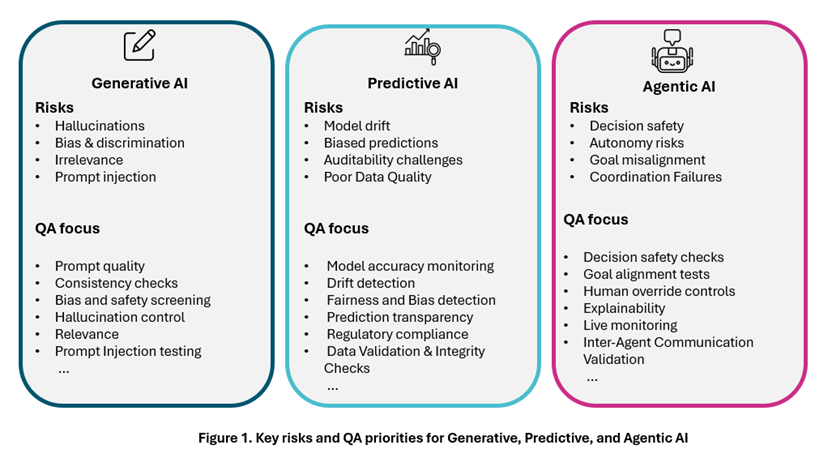

QA Focus Areas: Targeting AI Risks

Quality Assurance must align its activities with the vulnerabilities identified above. Standard and repetitive checks are insufficient; assurance must be risk driven and precisely targeted. Figure 1 offers a quick reference to the key risks and QA priorities for each AI type, supporting the detailed focus areas that follow.

The following sections outline practical QA measures tailored to each AI type.

Generative AI – Examples of QA and AI governance focus areas

To keep outputs reliable and safe, QA and AI governance often prioritise checks such as:

- Prompt Quality: Ensuring inputs are clear, well-structured, and free of ambiguity that could mislead the system. QA reviews prompt design and tests variations to confirm the model interprets inputs consistently, guided by AI governance standards.

- Consistency Checks: Verifying that responses remain stable and coherent across similar queries. Repeated prompts with slight variations are run to ensure outputs do not drift or contradict.

- Bias and Safety Screening: Detecting and preventing discriminatory, offensive, or harmful content in generated outputs. Automated checks and targeted evaluations are applied to confirm responses meet ethical, safety, and AI governance requirements.

- Hallucination Control: Validating that generated information does not invent false details. Outputs, including citations and quotations, are compared against trusted sources to ensure accuracy and support AI governance obligations around reliability.

- Relevance Testing: Confirming that answers directly address user intent and avoid unnecessary or off topic information. Responses are assessed to confirm they remain focused on the query and provide useful detail.

- Prompt Injection Testing: Ensuring resilience against malicious or manipulative inputs. QA simulates unsafe or deceptive prompts to verify that the system blocks or neutralises them effectively, in line with AI governance policies.
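The consistency check in the list above lends itself to automation. Here is a minimal sketch, assuming the system under test can be called as a function from prompt to answer; `stub_model` is a stand-in rather than a real model, and character-level similarity from `difflib` is a deliberately crude proxy that a real test suite would likely replace with a semantic comparison.

```python
from difflib import SequenceMatcher
from itertools import combinations
from typing import Callable


def consistency_score(model: Callable[[str], str], prompts: list[str]) -> float:
    """Lowest pairwise similarity (0 to 1) between answers to paraphrased prompts."""
    answers = [model(p) for p in prompts]
    ratios = [
        SequenceMatcher(None, a.lower(), b.lower()).ratio()
        for a, b in combinations(answers, 2)
    ]
    return min(ratios) if ratios else 1.0


# Stand-in for a real model call; replace with the system under test.
def stub_model(prompt: str) -> str:
    if "refund" in prompt.lower():
        return "Refunds are processed within 14 days."
    return "Please contact support for help."


paraphrases = [
    "How long does a refund take?",
    "When will I get my refund?",
    "What is the refund processing time?",
]
score = consistency_score(stub_model, paraphrases)
print(f"consistency: {score:.2f}")
```

Reporting the lowest pairwise score rather than the average makes a single contradictory answer visible instead of letting it be diluted by the consistent ones.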

Predictive AI – Examples of QA and AI governance focus areas

To keep outputs reliable and safe, QA and AI governance emphasise checks such as:

- Model Accuracy Monitoring: Predictions must remain precise and aligned with performance benchmarks. Accuracy is tracked against validation datasets and monitored over time to confirm consistent quality.

- Drift Detection: Over time, data patterns and behaviours can change, reducing reliability. Ongoing monitoring detects these shifts early so models can be recalibrated before performance declines, as required by AI governance controls.

- Fairness and Bias Detection: Predictive models must deliver equitable outcomes across groups. Outputs are reviewed across demographic segments and edge cases to ensure fairness is maintained under AI governance and regulatory expectations.

- Prediction Transparency: Predictions should be traceable and understandable. Documentation and communication make it clear how inputs and model logic lead to the final result, supporting explainability goals in AI governance.

- Regulatory Compliance: Outputs must meet legal and industry requirements. Compliance frameworks are applied, and evidence is documented to demonstrate adherence during audits as part of AI governance.

- Data Validation and Integrity Checks: Input data must be accurate, complete, and free from anomalies. Integrity checks and validation processes confirm data reliability before predictions are generated.
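Reviewing outputs across demographic segments can start with something as simple as comparing positive prediction rates per group. The sketch below is one possible approach under stated assumptions: the group labels and records are invented, and the 0.8 review threshold is an illustrative choice that each organisation would need to set for its own context.

```python
from collections import defaultdict


def selection_rates(records: list[tuple[str, int]]) -> dict[str, float]:
    """Share of positive predictions (1) per group from (group, prediction) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {g: positives[g] / totals[g] for g in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Invented sample: (group label, model prediction) pairs.
predictions = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]
rates = selection_rates(predictions)
ratio = disparity_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumed value for illustration
print(rates, f"ratio={ratio:.2f}", "review" if ratio < REVIEW_THRESHOLD else "ok")
```

A low ratio does not by itself establish unfairness, since groups may differ for legitimate reasons; it indicates where a closer review of data and model behaviour is warranted.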

Agentic AI – Examples of QA and AI governance focus areas

To ensure outputs remain reliable and safe, QA and AI governance apply a range of checks, including:

- Decision Safety Checks: Ensuring autonomous decisions remain within defined safety boundaries and do not trigger harmful actions.

- Goal Alignment Tests: Confirming agent behaviour consistently aligns with intended objectives and avoids unintended outcomes, as defined in AI governance policies.

- Human Override Controls: Ensuring operators can override agent actions when necessary. QA confirms override features are properly built in, accessible only to authorised operators, and tests that override inputs immediately restrain or adjust operations, including under failure scenarios such as unsafe decisions or agents stuck in loops.

- Explainability: Ensuring agent decisions and resulting actions can be clearly interpreted and presented with a transparent flow of reasoning to support trust and accountability. QA checks include verifying that decision logs capture the reasoning steps, testing whether outputs and actions can be traced back to inputs, and reviewing whether the logic driving decisions and actions is transparent and clear across stakeholder groups, in line with AI governance standards.

- Live Monitoring: Continuously tracking agent activity to detect anomalies, errors, or unsafe behaviours in real time.

- Inter-Agent Communication Validation: Confirming that interactions between agents remain accurate, secure, and free from miscommunication or manipulation.
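Two of the checks above, human override and decision logging, can be exercised together in a small test harness. The following is a toy sketch rather than a production design: the `OverridableAgent` class, its method names, and the log fields are hypothetical, and it omits the operator authorisation that a real override would need.

```python
import json
from datetime import datetime, timezone


class OverridableAgent:
    """Toy agent wrapper with a decision log and an operator stop switch."""

    def __init__(self) -> None:
        self.halted = False
        self.log: list[dict] = []

    def halt(self, operator: str) -> None:
        """Human override: stop all further actions and record who did it."""
        self.halted = True
        self._record("override", {"operator": operator}, "halted by operator")

    def act(self, action: str, inputs: dict, reasoning: str) -> bool:
        """Attempt an action; returns False if the override is active."""
        if self.halted:
            self._record("rejected:" + action, inputs, "override active")
            return False
        self._record(action, inputs, reasoning)
        return True

    def _record(self, action: str, inputs: dict, reasoning: str) -> None:
        # Each entry ties an action to its inputs and the stated reasoning.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "inputs": inputs,
            "reasoning": reasoning,
        })


agent = OverridableAgent()
first = agent.act("book_flight", {"route": "LHR-CDG"}, "lowest fare found")
agent.halt(operator="ops-lead")
second = agent.act("book_hotel", {"city": "Paris"}, "matches itinerary")
print(json.dumps(agent.log, indent=2))
```

A QA test can then assert that no action succeeds after the override and that every attempt, including rejected ones, leaves a log entry that traces back to its inputs.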

What’s Next: Building Quality and AI Governance into the AI Lifecycle

Generative, predictive, and agentic AI each introduce unique risks, so QA cannot be one-size-fits-all. Tailored assurance is essential, but it’s only part of the solution. Quality must be embedded from design through deployment, supported by AI governance and continuous oversight.

In our next article, we’ll examine how lifecycle checkpoints strengthen assurance by embedding quality and AI governance controls at every stage. These checks ensure risks are managed early, governance is maintained, and systems remain reliable and compliant as they evolve.

To discuss this topic in person with Dr Asma Zoghlami, join our AI Breakfast Briefing on 22 January 2026 at The Wolseley City, London.

If you can’t join us, register for our AI workshop so we can help you design the right AI governance and QA strategy for your needs.